Where we came from

Image courtesy of xkcd.com

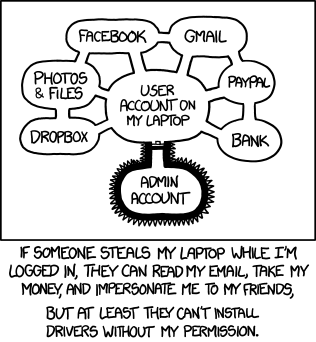

Around a decade ago, operating systems typically had two privilege levels: user and administrator. The administrator would set up the system and install applications for the user, who then had little permission to modify the system themselves. Different users could share a system, and each user and their applications would be unable to interfere with another user’s applications and data.

However, this model doesn’t stop a user’s applications from interfering with other applications and data owned by the same user. Remarkably effective worms such as Melissa and Love Letter in the 1990s and early 2000s spread by extracting the contacts from the user’s address book application, and then using the user’s mail application to send copies of the worm to those contacts.

The modern privilege model

It was clear that the user/administrator model had weaknesses, and so technologies like sandboxing began to appear. Platforms that incorporate sandboxing create a limited number of well-defined ways for applications to interact with each other, many of which require the user to grant permission before the interaction can take place.

A Melissa equivalent on a modern platform would have to ask the user for permission to read their contact list, and then ask the user again for permission to send the email; it would be unable to send the email itself without the user’s explicit involvement and agreement.

This only holds, however, if there are no vulnerabilities in the platform which allow privilege escalation. A jailbreak (called rooting on some platforms) is simply a vulnerability in the platform which has been exploited in a controlled way to give some code extra permissions. Normally this is done to give the user extra control over their device, so they can customise it more, or install applications which aren’t otherwise available in app stores.

However, in order to achieve this, a jailbreak will normally disable or weaken some security features, such as sandboxing. Application signing may also be disabled to enable the user to install any application of their choosing. All this makes it much easier for malware to gain a presence on the device, as has been shown by code such as Unflod and Ikee.

This is clearly bad from a security point of view, so device administrators may want to detect if this is happening on their organisation’s devices. Many Mobile Device Managers (MDMs) today claim to detect jailbreaks. They generally do this by attempting to detect the artefacts of the jailbreak, rather than the actual exploitation of the vulnerability which was used to escalate the privileges of the jailbreak code.

For example, a jailbreak detection tool may look for the presence of a third-party application store (e.g. Cydia). It may check to see if it can execute unsigned code. It may look to see if additional libraries are loaded into its own process space. The key point is that it can only look for a limited number of things that it knows to look for, and also has permission to look for.
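The artefact check described above can be sketched in a few lines. This is a minimal illustration, not how any particular MDM works: the function `find_artefacts`, the helper `make_fake_device` and the list of paths are all hypothetical, and the "devices" are just temporary directories standing in for a device filesystem.

```python
import os
import tempfile

# Illustrative artefact list only (an assumption for this sketch).
# Real detection tools maintain their own, much longer lists.
KNOWN_ARTEFACTS = [
    "Applications/Cydia.app",  # third-party application store
    "bin/bash",                # shell not normally present
    "usr/sbin/sshd",           # SSH server not normally present
]


def find_artefacts(root, artefacts=KNOWN_ARTEFACTS):
    """Return the known artefact paths that are visible under `root`.

    os.path.exists() returns False both when a path is absent and when
    the caller lacks permission to inspect it, so the check is limited
    by what the detector knows about and is allowed to see.
    """
    return [path for path in artefacts
            if os.path.exists(os.path.join(root, path))]


def make_fake_device(paths):
    """Create a temporary directory tree standing in for a device."""
    root = tempfile.mkdtemp()
    for path in paths:
        os.makedirs(os.path.join(root, path))
    return root


# A device jailbroken in the usual way leaves a known artefact behind.
jailbroken = make_fake_device(["Applications/Cydia.app"])
# A payload installed somewhere the detector does not know about.
stealthy = make_fake_device(["private/var/hidden_payload"])

print(find_artefacts(jailbroken))  # ['Applications/Cydia.app']
print(find_artefacts(stealthy))    # []
```

The second result is the important one: the device has been modified, but because the modification is not on the list, the check reports nothing.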

The malware threat

A jailbreak is usually a benign payload attached to an exploit for a vulnerability in the platform. Vulnerabilities allowing privilege escalation on modern platforms are rare, thanks to the excellent work of security teams across the major product vendors. However, when a jailbreak contains such an exploit, malware authors are able to take that exploit and repackage it with a more malicious payload. This lets them install their own code with high privileges, without modifying the underlying platform in the way the jailbreak does. Because the artefacts of the jailbreak are then absent, the malware is able to evade jailbreak detection software in MDMs.

So can anti-malware software on modern devices help? The irony of attempting to scan for malware on a sandboxed platform is that, without additional permissions, the scanning application is itself limited by the sandbox and code signing that attempt to keep the platform secure. Techniques used on older platforms (such as checking apps before they run, scanning the entire storage system, and reviewing what applications are present) may all be hard, if not impossible, for anti-malware tools to achieve on a modern platform.

Does jailbreak detection work?

Jailbreak detection can help identify where the user has deliberately altered the security of their device, assuming jailbreak authors don’t begin routinely evading jailbreak detection. A determined user could, however, jailbreak their own device and then install an application such as xCon, which disables the jailbreak detection routines.

What jailbreak detection cannot detect is the presence of the original exploit(s) which permitted the privilege escalation in the first place. No third-party product can possibly do this. These inherent vulnerabilities and potential exploits can only be fixed through the installation of security updates from the product vendors themselves.

So what can I do?

Jailbreak detection is useful, but don’t rely on it exclusively. Instead, it is extremely important to have a rapid-response patching policy. Users should be able to update their devices as soon as updates are available. Prefer devices whose manufacturers support the installed platform for the device’s lifetime. In fact, one of CESG’s twelve principles to think about when choosing a device is exactly this – Device update policy.
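As a rough illustration of what checking a patching policy might involve, the sketch below flags devices running a platform version older than a required minimum. Everything here is an assumption made for the example: the function names, the device names and the version numbers are hypothetical, and a real MDM would supply its own inventory and reporting.

```python
def parse_version(text):
    """Turn a dotted version string such as '8.1.2' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def is_patched(device_version, minimum_version):
    """Return True if device_version is at least minimum_version."""
    device = parse_version(device_version)
    minimum = parse_version(minimum_version)
    # Pad the shorter version with zeros so '8.3' compares equal to '8.3.0'.
    length = max(len(device), len(minimum))
    device += (0,) * (length - len(device))
    minimum += (0,) * (length - len(minimum))
    return device >= minimum


def out_of_date(devices, minimum_version):
    """Return the names of devices running a version below the minimum."""
    return [name for name, version in devices.items()
            if not is_patched(version, minimum_version)]


# Hypothetical inventory: device name -> installed platform version.
inventory = {"phone-a": "8.1.2", "phone-b": "8.3", "tablet-c": "8.10"}

print(out_of_date(inventory, "8.3"))  # ['phone-a']
```

Comparing versions as tuples of integers rather than as strings matters here: as a string, "8.10" would sort before "8.3" and the tablet would be wrongly reported as out of date.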

Key points

- Jailbreak detection identifies the artefacts of jailbreaking and can be subverted if the jailbreak author chooses to do so.

- Malware can leverage the exploits present in a jailbreak, either by using the same exploit directly, or by taking advantage of weakened security on an already-jailbroken platform.

- It makes sense to enable jailbreak detection via your MDM, but be aware of its weaknesses and don’t rely on it alone.

- Keeping devices patched, and ensuring users understand why jailbreaking their devices is a bad idea, are arguably more important steps.